Notes on a Kafka OOM error:

Here is the background: I installed ZooKeeper and Kafka on my Windows 10 machine for debugging.

The first start-up went fine, and both the producer and the consumer worked normally.

Then I used code to produce data in a loop, and Kafka crashed.

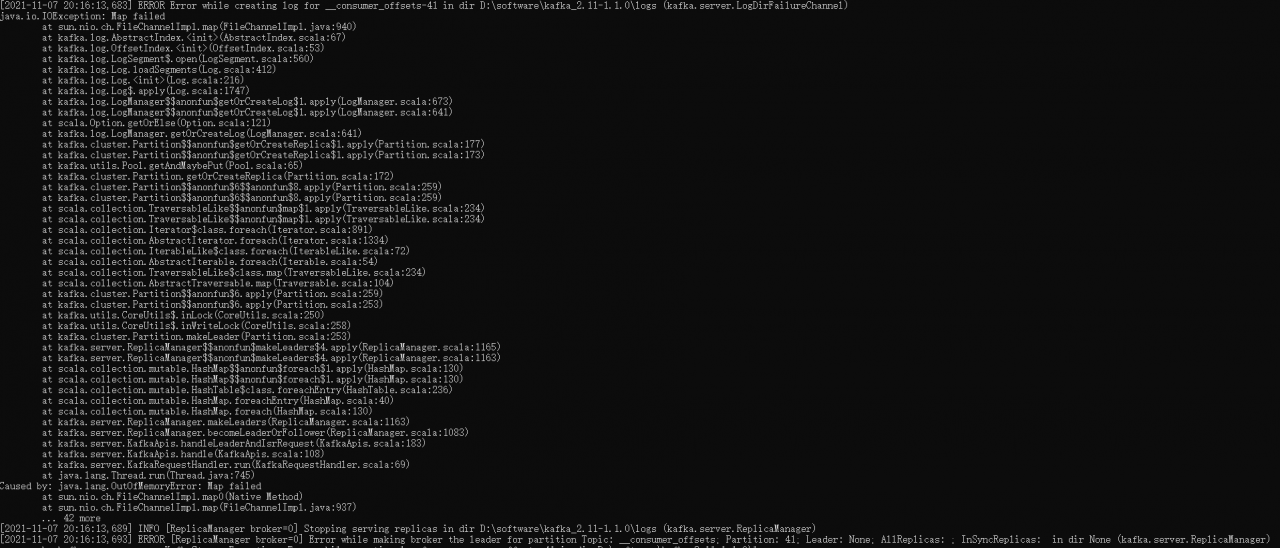

I then restarted Kafka, but it would no longer start. The startup log reported an OOM error, as follows:

[2021-11-07 20:16:13,683] ERROR Error while creating log for __consumer_offsets-41 in dir D:\software\kafka_2.11-1.1.0\logs (kafka.server.LogDirFailureChannel)

java.io.IOException: Map failed

at sun.nio.ch.FileChannelImpl.map(FileChannelImpl.java:940)

at kafka.log.AbstractIndex.<init>(AbstractIndex.scala:67)

at kafka.log.OffsetIndex.<init>(OffsetIndex.scala:53)

at kafka.log.LogSegment$.open(LogSegment.scala:560)

at kafka.log.Log.loadSegments(Log.scala:412)

at kafka.log.Log.<init>(Log.scala:216)

at kafka.log.Log$.apply(Log.scala:1747)

at kafka.log.LogManager$$anonfun$getOrCreateLog$1.apply(LogManager.scala:673)

at kafka.log.LogManager$$anonfun$getOrCreateLog$1.apply(LogManager.scala:641)

at scala.Option.getOrElse(Option.scala:121)

at kafka.log.LogManager.getOrCreateLog(LogManager.scala:641)

at kafka.cluster.Partition$$anonfun$getOrCreateReplica$1.apply(Partition.scala:177)

at kafka.cluster.Partition$$anonfun$getOrCreateReplica$1.apply(Partition.scala:173)

at kafka.utils.Pool.getAndMaybePut(Pool.scala:65)

at kafka.cluster.Partition.getOrCreateReplica(Partition.scala:172)

at kafka.cluster.Partition$$anonfun$6$$anonfun$8.apply(Partition.scala:259)

at kafka.cluster.Partition$$anonfun$6$$anonfun$8.apply(Partition.scala:259)

at scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:234)

at scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:234)

at scala.collection.Iterator$class.foreach(Iterator.scala:891)

at scala.collection.AbstractIterator.foreach(Iterator.scala:1334)

at scala.collection.IterableLike$class.foreach(IterableLike.scala:72)

at scala.collection.AbstractIterable.foreach(Iterable.scala:54)

at scala.collection.TraversableLike$class.map(TraversableLike.scala:234)

at scala.collection.AbstractTraversable.map(Traversable.scala:104)

at kafka.cluster.Partition$$anonfun$6.apply(Partition.scala:259)

at kafka.cluster.Partition$$anonfun$6.apply(Partition.scala:253)

at kafka.utils.CoreUtils$.inLock(CoreUtils.scala:250)

at kafka.utils.CoreUtils$.inWriteLock(CoreUtils.scala:258)

at kafka.cluster.Partition.makeLeader(Partition.scala:253)

at kafka.server.ReplicaManager$$anonfun$makeLeaders$4.apply(ReplicaManager.scala:1165)

at kafka.server.ReplicaManager$$anonfun$makeLeaders$4.apply(ReplicaManager.scala:1163)

at scala.collection.mutable.HashMap$$anonfun$foreach$1.apply(HashMap.scala:130)

at scala.collection.mutable.HashMap$$anonfun$foreach$1.apply(HashMap.scala:130)

at scala.collection.mutable.HashTable$class.foreachEntry(HashTable.scala:236)

at scala.collection.mutable.HashMap.foreachEntry(HashMap.scala:40)

at scala.collection.mutable.HashMap.foreach(HashMap.scala:130)

at kafka.server.ReplicaManager.makeLeaders(ReplicaManager.scala:1163)

at kafka.server.ReplicaManager.becomeLeaderOrFollower(ReplicaManager.scala:1083)

at kafka.server.KafkaApis.handleLeaderAndIsrRequest(KafkaApis.scala:183)

at kafka.server.KafkaApis.handle(KafkaApis.scala:108)

at kafka.server.KafkaRequestHandler.run(KafkaRequestHandler.scala:69)

at java.lang.Thread.run(Thread.java:745)

Caused by: java.lang.OutOfMemoryError: Map failed

at sun.nio.ch.FileChannelImpl.map0(Native Method)

at sun.nio.ch.FileChannelImpl.map(FileChannelImpl.java:937)

... 42 more

[2021-11-07 20:16:13,689] INFO [ReplicaManager broker=0] Stopping serving replicas in dir D:\software\kafka_2.11-1.1.0\logs (kafka.server.ReplicaManager)

[2021-11-07 20:16:13,693] ERROR [ReplicaManager broker=0] Error while making broker the leader for partition Topic: __consumer_offsets; Partition: 41; Leader: None; AllReplicas: ; InSyncReplicas: in dir None (kafka.server.ReplicaManager)

org.apache.kafka.common.errors.KafkaStorageException: Error while creating log for __consumer_offsets-41 in dir D:\software\kafka_2.11-1.1.0\logs

Caused by: java.io.IOException: Map failed

at sun.nio.ch.FileChannelImpl.map(FileChannelImpl.java:940)

at kafka.log.AbstractIndex.<init>(AbstractIndex.scala:67)

at kafka.log.OffsetIndex.<init>(OffsetIndex.scala:53)

at kafka.log.LogSegment$.open(LogSegment.scala:560)

at kafka.log.Log.loadSegments(Log.scala:412)

at kafka.log.Log.<init>(Log.scala:216)

at kafka.log.Log$.apply(Log.scala:1747)

at kafka.log.LogManager$$anonfun$getOrCreateLog$1.apply(LogManager.scala:673)

at kafka.log.LogManager$$anonfun$getOrCreateLog$1.apply(LogManager.scala:641)

at scala.Option.getOrElse(Option.scala:121)

at kafka.log.LogManager.getOrCreateLog(LogManager.scala:641)

at kafka.cluster.Partition$$anonfun$getOrCreateReplica$1.apply(Partition.scala:177)

at kafka.cluster.Partition$$anonfun$getOrCreateReplica$1.apply(Partition.scala:173)

at kafka.utils.Pool.getAndMaybePut(Pool.scala:65)

at kafka.cluster.Partition.getOrCreateReplica(Partition.scala:172)

at kafka.cluster.Partition$$anonfun$6$$anonfun$8.apply(Partition.scala:259)

at kafka.cluster.Partition$$anonfun$6$$anonfun$8.apply(Partition.scala:259)

at scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:234)

at scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:234)

at scala.collection.Iterator$class.foreach(Iterator.scala:891)

at scala.collection.AbstractIterator.foreach(Iterator.scala:1334)

at scala.collection.IterableLike$class.foreach(IterableLike.scala:72)

at scala.collection.AbstractIterable.foreach(Iterable.scala:54)

at scala.collection.TraversableLike$class.map(TraversableLike.scala:234)

at scala.collection.AbstractTraversable.map(Traversable.scala:104)

at kafka.cluster.Partition$$anonfun$6.apply(Partition.scala:259)

at kafka.cluster.Partition$$anonfun$6.apply(Partition.scala:253)

at kafka.utils.CoreUtils$.inLock(CoreUtils.scala:250)

at kafka.utils.CoreUtils$.inWriteLock(CoreUtils.scala:258)

at kafka.cluster.Partition.makeLeader(Partition.scala:253)

at kafka.server.ReplicaManager$$anonfun$makeLeaders$4.apply(ReplicaManager.scala:1165)

at kafka.server.ReplicaManager$$anonfun$makeLeaders$4.apply(ReplicaManager.scala:1163)

at scala.collection.mutable.HashMap$$anonfun$foreach$1.apply(HashMap.scala:130)

at scala.collection.mutable.HashMap$$anonfun$foreach$1.apply(HashMap.scala:130)

at scala.collection.mutable.HashTable$class.foreachEntry(HashTable.scala:236)

at scala.collection.mutable.HashMap.foreachEntry(HashMap.scala:40)

at scala.collection.mutable.HashMap.foreach(HashMap.scala:130)

at kafka.server.ReplicaManager.makeLeaders(ReplicaManager.scala:1163)

at kafka.server.ReplicaManager.becomeLeaderOrFollower(ReplicaManager.scala:1083)

at kafka.server.KafkaApis.handleLeaderAndIsrRequest(KafkaApis.scala:183)

at kafka.server.KafkaApis.handle(KafkaApis.scala:108)

at kafka.server.KafkaRequestHandler.run(KafkaRequestHandler.scala:69)

at java.lang.Thread.run(Thread.java:745)

Caused by: java.lang.OutOfMemoryError: Map failed

at sun.nio.ch.FileChannelImpl.map0(Native Method)

at sun.nio.ch.FileChannelImpl.map(FileChannelImpl.java:937)

... 42 more

[2021-11-07 20:16:13,836] ERROR Error while creating log for __consumer_offsets-32 in dir D:\software\kafka_2.11-1.1.0\logs (kafka.server.LogDirFailureChannel)
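Both stack traces above fail in FileChannelImpl.map, called from AbstractIndex.<init>, so the broker runs out of memory while memory-mapping a partition index file rather than while allocating heap. As a rough sketch of how much space that mapping can need, the arithmetic below assumes Kafka's default settings (log.index.size.max.bytes = 10485760, offsets.topic.num.partitions = 50) and two preallocated index files per active segment. None of these values were checked against the server.properties used in this post, and the function name is purely illustrative.

```python
# Rough estimate of the address space Kafka memory-maps for index files.
# Assumptions (not taken from the post): default broker settings and one
# active segment per partition with an offset index and a time index.

INDEX_MAX_BYTES = 10 * 1024 * 1024  # default log.index.size.max.bytes
INDEXES_PER_SEGMENT = 2             # .index and .timeindex


def mapped_index_bytes(partitions: int,
                       index_max_bytes: int = INDEX_MAX_BYTES,
                       indexes_per_segment: int = INDEXES_PER_SEGMENT) -> int:
    """Approximate bytes mapped for the active segment of every partition."""
    return partitions * indexes_per_segment * index_max_bytes


if __name__ == "__main__":
    offsets_partitions = 50  # default offsets.topic.num.partitions
    total = mapped_index_bytes(offsets_partitions)
    # Prints: __consumer_offsets alone: about 1000 MiB mapped
    print(f"__consumer_offsets alone: about {total / 1024 ** 2:.0f} MiB mapped")
```

Under these assumptions the __consumer_offsets topic alone asks for about 1000 MiB of mapped space on top of the JVM heap. Whether that exceeds what the process can map depends on the JVM bitness and the memory available on the machine, which the post does not state.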

Resolution process:

First, I tried restarting Kafka, deleting the Kafka logs, restarting ZooKeeper, and even rebooting Windows. None of this helped.

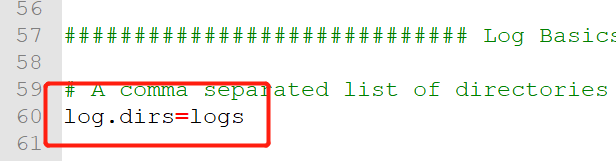

Kafka log path: log.dirs=logs in %KAFKA_HOME%\config\server.properties
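To double-check which directory a broker actually writes to, the setting can be read straight from the properties file. The snippet below is a minimal sketch with a hypothetical helper, read_property. It only handles plain key=value lines and ignores the escaping and line-continuation rules of the full Java properties format.

```python
def read_property(properties_text: str, key: str):
    """Return the value of `key` from simple key=value text, or None."""
    value = None
    for raw in properties_text.splitlines():
        line = raw.strip()
        # Skip blank lines and comment lines ('#' or '!').
        if not line or line.startswith(("#", "!")):
            continue
        name, sep, rest = line.partition("=")
        if sep and name.strip() == key:
            value = rest.strip()  # the last occurrence wins
    return value


if __name__ == "__main__":
    sample = "# directories for log files\nlog.dirs=logs\n"
    print(read_property(sample, "log.dirs"))  # prints: logs
```

A relative value such as logs is resolved against the directory the broker is started from, which would match the D:\software\kafka_2.11-1.1.0\logs path in the error messages if Kafka was launched from its installation directory.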

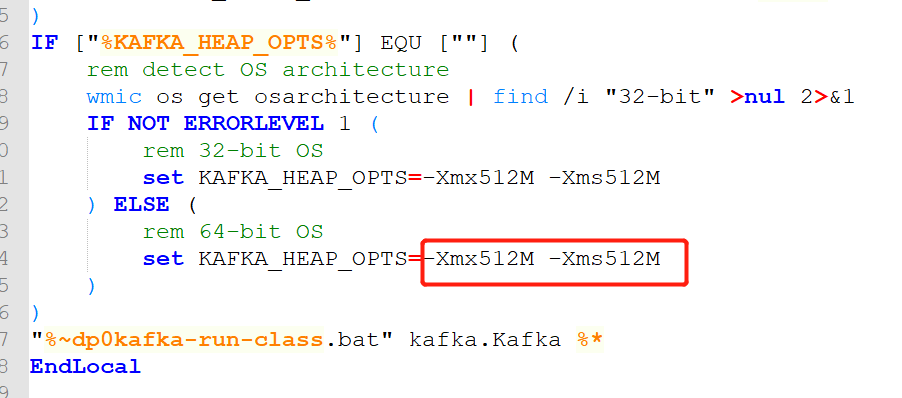

Next, following a solution I found online, I modified the JVM parameters in kafka-server-start.bat, changing both 1G values to 512M. With that change Kafka started, but crashed again almost immediately.

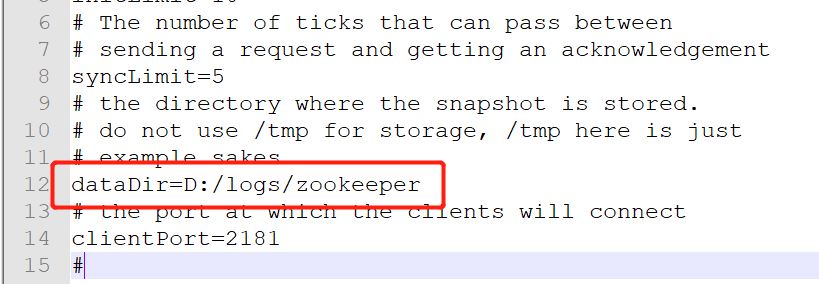

Eventually, I noticed that every time Kafka restarted, a pile of files was regenerated under logs, even though I had manually deleted them before each start-up. This was strange, because I did not know where the data was being cached. In the end, I also deleted everything under ZooKeeper's data directory.

ZooKeeper data path: dataDir=D:/logs/zookeeper in %zookeeper%\conf\zoo.cfg
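For a throwaway local setup like this one, the two clean-up steps can be done together so that Kafka and ZooKeeper do not disagree about which topics exist. The sketch below is only an illustration: clear_directory and reset_local_kafka are hypothetical helpers, not part of Kafka or ZooKeeper. It assumes both processes are stopped and that losing all topics and offsets is acceptable.

```python
import shutil
from pathlib import Path


def clear_directory(directory: Path) -> int:
    """Delete everything inside `directory`, keeping the directory itself.

    Returns the number of top-level entries removed (0 if it does not exist).
    """
    if not directory.is_dir():
        return 0
    removed = 0
    for entry in directory.iterdir():
        if entry.is_dir() and not entry.is_symlink():
            shutil.rmtree(entry)   # partition folders, version-2, ...
        else:
            entry.unlink()         # plain files and symlinks
        removed += 1
    return removed


def reset_local_kafka(kafka_log_dir: Path, zookeeper_data_dir: Path) -> dict:
    """Wipe the Kafka log dir and the ZooKeeper dataDir in one step."""
    return {
        "kafka": clear_directory(kafka_log_dir),
        "zookeeper": clear_directory(zookeeper_data_dir),
    }
```

With the paths from this post, the call would be reset_local_kafka(Path(r"D:\software\kafka_2.11-1.1.0\logs"), Path("D:/logs/zookeeper")). This is destructive, so it is only suitable for a disposable debugging environment.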

Conclusion: the main reason Kafka ran out of memory at start-up was that its data (the topic and partition metadata) was still stored in ZooKeeper, which Kafka depends on. Surprised? Caught off guard?