1. Question:

A Flink job failed to start, and the log showed this exception: java.lang.OutOfMemoryError: unable to create new native thread

2. Solution:

1. At first I guessed that the message queue (ActiveMQ) was the cause, because it was processing a lot of data and opening many threads, so I built a cluster for MQ.

MQ cluster construction method: http://blog.csdn.net/jiangxuchen/article/details/8004561

But after setting up the cluster, it made no difference at all, and the problem remained.

Moving on...

2. The next suspicion was that the system itself was creating too many threads. After reducing them, the problem still existed.

Moving on...

3. Memory tuning: reducing the -Xss value (thread stack size) and the JVM heap size still did not solve it.

Moving on...

4. After several weeks of testing, I sorted out my thinking and decided that the first task was to reproduce the problem, so I wrote a test program to find the maximum number of threads the operating system would let me create:

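    import java.util.concurrent.CountDownLatch;

    public class TestNativeOutOfMemoryError {

        public static void main(String[] args) {
            // Keep starting threads until the OS refuses to create another one.
            for (int i = 0;; i++) {
                System.out.println(" i = " + i);
                // HoldThread is already a Thread, so start it directly
                // (wrapping it in another Thread would ignore its daemon flag).
                new HoldThread().start();
            }
        }
    }

    class HoldThread extends Thread {
        // Never counted down, so every thread stays parked and keeps its native thread alive.
        CountDownLatch cdl = new CountDownLatch(1);

        public HoldThread() {
            this.setDaemon(true);
        }

        @Override
        public void run() {
            try {
                cdl.await();
            } catch (InterruptedException e) {
                // Restore the interrupt flag and let the thread exit.
                Thread.currentThread().interrupt();
            }
        }
    }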

After running:

    i = 982
    Exception in thread "main" java.lang.OutOfMemoryError: unable to create new native thread
        at java.lang.Thread.start0(Native Method)
        at java.lang.Thread.start(Thread.java:597)
        at TestNativeOutOfMemoryError.main(TestNativeOutOfMemoryError.java:20)

The problem was reproduced. After several runs, it turned out that the production system could create only a little over 980 threads. The production server runs 64-bit CentOS with JDK 1.7 and 64 GB of memory, whereas my local PC can create about 2500.
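The reproduction above can also be written so that it stops cleanly and reports the count instead of dying with a stack trace. This is only a sketch under some assumptions: it needs Java 8 or later (for the lambda), and the class name `ThreadLimitProbe`, the method `createThreads`, and the default cap of 5000 are made up for illustration. The cap is there so the probe cannot exhaust the whole machine.

```java
import java.util.concurrent.CountDownLatch;

final class ThreadLimitProbe {

    /** Starts up to maxThreads parked daemon threads and returns how many actually started. */
    static int createThreads(int maxThreads) {
        CountDownLatch release = new CountDownLatch(1);
        int started = 0;
        try {
            for (int i = 0; i < maxThreads; i++) {
                Thread t = new Thread(() -> {
                    try {
                        release.await();            // park until the probe is finished
                    } catch (InterruptedException e) {
                        Thread.currentThread().interrupt();
                    }
                });
                t.setDaemon(true);
                t.start();                          // throws OutOfMemoryError when the OS refuses
                started++;
            }
        } catch (OutOfMemoryError e) {
            // "unable to create new native thread": the limit has been reached.
        } finally {
            release.countDown();                    // let all parked threads exit
        }
        return started;
    }

    public static void main(String[] args) {
        // Hypothetical default cap; pass a different value as the first argument.
        int cap = args.length > 0 ? Integer.parseInt(args[0]) : 5000;
        System.out.println("threads started: " + createThreads(cap));
    }
}
```

If the printed number is lower than the cap, the operating system (or the account's limit) stopped the program before the cap did.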

With the cause almost in sight, I switched to the account that runs the programs and checked its limit:

$ su bigdata

$ ulimit -u

1024

All programs in production run under the bigdata account. A check showed that the total number of threads under this account was already 950, which means it could exceed 1024 at any moment and trigger the OutOfMemoryError. The command to view the number of threads currently running in a process is: pstree -p 5516 | wc -l

Note: 5516 is the PID of TaskManagerRunner
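The same count can be taken from Java. The sketch below is an illustration, not part of the original troubleshooting: `ThreadCounter` and its method names are hypothetical, and the `/proc/<pid>/task` approach assumes a Linux system. Keep in mind that the limit applies to the whole account, so the count for one process is only part of the total.

```java
import java.io.File;
import java.lang.management.ManagementFactory;

final class ThreadCounter {

    /** Number of live threads inside the current JVM. */
    static int jvmThreadCount() {
        return ManagementFactory.getThreadMXBean().getThreadCount();
    }

    /**
     * Number of native threads of a process on Linux, counted as the entries
     * of /proc/<pid>/task. Returns -1 if that directory cannot be read.
     */
    static int osThreadCount(String pid) {
        String[] tasks = new File("/proc/" + pid + "/task").list();
        return tasks == null ? -1 : tasks.length;
    }

    public static void main(String[] args) {
        // "self" resolves to the current process; pass a PID such as 5516 instead.
        String pid = args.length > 0 ? args[0] : "self";
        System.out.println("JVM threads (this process): " + jvmThreadCount());
        System.out.println("OS threads of " + pid + ": " + osThreadCount(pid));
    }
}
```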

The cause was found: the operating system limits the maximum number of threads for the account that runs the program (on Linux, the ulimit -u limit counts threads as well as processes).

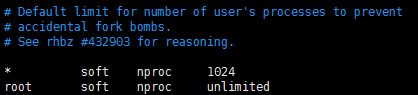

$ vim /etc/security/limits.d/90-nproc.conf

After opening it, I found that every account except root was limited to 1024.

So I added one line: bigdata soft nproc 20000

Why 20000? In testing, once the thread count reached about 35000, the system reported out-of-memory errors and no operating system command could be run any more, so the program's maximum number of threads was capped at 20000.

After the change, the OutOfMemoryError no longer occurred. Problem solved.
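One thing worth checking is whether a running process really picked up the new value, since limits of this kind are normally applied when a session starts and an already running process keeps its old ones. On Linux the effective limits of a process are listed in /proc/<pid>/limits. The following is a minimal sketch of reading the soft "Max processes" value from that file; the class `NprocLimit` and the method `softMaxProcesses` are hypothetical names, not something from the original setup.

```java
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.List;

final class NprocLimit {

    /**
     * Returns the soft "Max processes" value from the lines of /proc/<pid>/limits,
     * for example "1024" or "unlimited", or null if the line is missing.
     */
    static String softMaxProcesses(List<String> lines) {
        for (String line : lines) {
            if (line.startsWith("Max processes")) {
                // Columns: "Max", "processes", soft limit, hard limit, units
                String[] cols = line.trim().split("\\s+");
                return cols.length > 2 ? cols[2] : null;
            }
        }
        return null;
    }

    public static void main(String[] args) throws Exception {
        // "self" is the current process; pass the PID of the process to inspect instead.
        String pid = args.length > 0 ? args[0] : "self";
        List<String> lines =
                Files.readAllLines(Paths.get("/proc", pid, "limits"), StandardCharsets.UTF_8);
        System.out.println("soft nproc limit: " + softMaxProcesses(lines));
    }
}
```

If the value printed for the process is still 1024, restarting it from a fresh login of the account should make the new setting take effect.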

3. Reflections:

1. The lesson: when you hit a problem, do not blindly change settings everywhere. The first thing to do is reproduce the problem, then follow the clues step by step until you find the root cause.

2. Regarding Tomcat memory tuning, I personally think tuning is only needed for medium and large systems, or when the server hardware is modest.
