I have recently been deploying a web log statistics program. I came across several mainstream open-source analysis tools online but did not know which one was better. After some searching, I now have a general understanding of how three common options differ: Google Analytics, AWStats and Webalizer. Different web statistics programs serve different purposes and give different results.

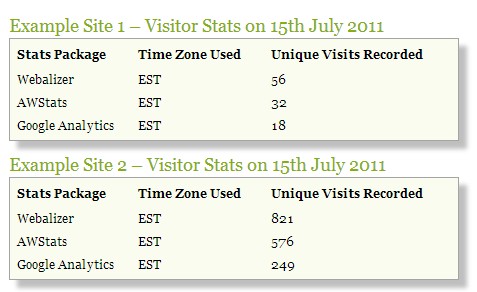

The image shows a real-world set of statistics from all three tools.

The main difference among the three is how they collect data. Google Analytics collects visitor information through a piece of code embedded in each page, so the data is gathered in the user’s browser. The other two analyze the logs on your web server, so the data is gathered on your server. The three also treat “days” differently when the visitors, the site and the site owner are in different time zones: Google Analytics mainly goes by the local time where you live, while the other two go by the clock on your site’s server.
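To make the time zone point concrete, here is a minimal Python sketch. It does not involve any of the three tools: the timestamp and the two UTC offsets (UTC-8 for the server, UTC+8 for the report) are made-up assumptions, and `day_of_hit` is a hypothetical helper. It only shows that one and the same hit can land on different calendar days depending on whose clock is used.

```python
from datetime import datetime, timedelta, timezone

def day_of_hit(utc_time: datetime, utc_offset_hours: int) -> str:
    """Return the calendar day (YYYY-MM-DD) a hit falls on at the given UTC offset."""
    local = utc_time.astimezone(timezone(timedelta(hours=utc_offset_hours)))
    return local.date().isoformat()

# Hypothetical hit at 02:30 UTC on 10 May 2024.
hit = datetime(2024, 5, 10, 2, 30, tzinfo=timezone.utc)

server_day = day_of_hit(hit, -8)  # assumed server clock, UTC-8
report_day = day_of_hit(hit, 8)   # assumed reporting clock, UTC+8

print(server_day, report_day)
```

With these assumed offsets the same request belongs to 9 May on the server and to 10 May in the report, so daily totals from the two sources cannot match exactly.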

Google Analytics

1. Google Analytics relies on a special piece of JavaScript code embedded in every page of your site. Embedding the code in all pages is usually easy and can be handled by tools such as a CMS, but it is not feasible for everyone. Some pages may have no suitable place for the tracking code, so visits to those pages are not counted and the statistics will contain errors.

2. Even if every page of the site contains the tracking code, the code may never get a chance to run if the page loads slowly, for example when the code is placed at the bottom of the page and the visitor leaves before it executes.

3. Some users are wary of malicious JavaScript and block some or all scripts in their browser. Visits from such users are not counted, so the statistics will be off.

4. Google Analytics relies on cookies to work out what a visitor has done, such as whether the visitor is new or returning and how long the visit lasted. If the user clears their cookies, Google Analytics loses this information.

5. Google Analytics does not record search engine robots or other crawlers that visit your site. If you want to know which robots visited your site, you have to look in the server logs.

6. Google Analytics uses a 30-minute session timeout. If you visit a site, go out to lunch for 31 minutes and then browse the same site again, it is counted as two visits.

As a site statistics tool, Google Analytics helps you find out where your visitors come from, how they found you, what they do on your site, and so on. With this information you can adapt your site to changes in the market. Generally speaking, it suits marketing staff who adjust business decisions based on statistics.

AWStats

AWStats identifies a human visitor based on IP address and user agent, and builds its statistics by analyzing your web server logs. For example, if a visitor with a single IP address views several pages with a browser user agent such as Firefox, AWStats concludes that a human visited the site. If the user agent is Googlebot, however, it excludes those requests from the visitor statistics.

AWStats keeps a database of the robots it can recognize, but some robots are not in it, so robot visits are sometimes counted as human visits. A user who reaches the site from different IP addresses within the same session may also be recorded as several visits. And if a user views a page that they already visited yesterday or last week, AWStats may not count all of those visits, typically because a page served from the browser cache never reaches the server log.
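The idea of identifying visitors by IP address plus user agent and filtering out known robots can be sketched in a few lines of Python. This is not AWStats code: the `KNOWN_ROBOTS` list, the `is_robot` and `count_visitors` functions and the sample hits are all hypothetical, and a real robot database is far larger.

```python
# Tiny stand-in for a robot database; the signatures are illustrative only.
KNOWN_ROBOTS = ("googlebot", "bingbot", "baiduspider")

def is_robot(user_agent: str) -> bool:
    """True if the user agent contains one of the known robot signatures."""
    ua = user_agent.lower()
    return any(signature in ua for signature in KNOWN_ROBOTS)

def count_visitors(hits):
    """Count distinct (ip, user_agent) pairs, split into humans and robots."""
    humans, robots = set(), set()
    for ip, user_agent in hits:
        (robots if is_robot(user_agent) else humans).add((ip, user_agent))
    return len(humans), len(robots)

FIREFOX = "Mozilla/5.0 (X11; Linux x86_64; rv:120.0) Gecko/20100101 Firefox/120.0"
GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

hits = [
    ("203.0.113.5", FIREFOX),                    # same person, two page views
    ("203.0.113.5", FIREFOX),
    ("66.249.66.1", GOOGLEBOT),                  # recognized robot
    ("198.51.100.7", "SomeUnknownCrawler/1.0"),  # robot missing from the list
]

humans, robots = count_visitors(hits)
print(humans, robots)
```

The unrecognized crawler ends up in the human count, which is the kind of error described above.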

With AWStats you can see how much bandwidth robots and crawlers consume on your site and where they come from, which is useful for finding out who is crawling your site.
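As a rough illustration of where such figures come from, the Python sketch below sums the bytes sent per user agent from access log lines in the common "combined" log format. The regular expression, the `bandwidth_by_agent` helper and the three sample lines are assumptions made up for this example; AWStats itself does considerably more.

```python
import re
from collections import Counter

# Simplified pattern for the Apache/Nginx "combined" log format.
LOG_LINE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) (?P<size>\d+|-) "(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)

def bandwidth_by_agent(lines):
    """Return the total number of bytes sent per user agent string."""
    totals = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that are not in the expected format
        size = match["size"]
        totals[match["agent"]] += 0 if size == "-" else int(size)
    return totals

sample = [
    '66.249.66.1 - - [10/May/2024:02:30:00 +0000] "GET / HTTP/1.1" 200 5120 "-" "Googlebot/2.1"',
    '66.249.66.1 - - [10/May/2024:02:31:00 +0000] "GET /about HTTP/1.1" 200 2048 "-" "Googlebot/2.1"',
    '203.0.113.5 - - [10/May/2024:02:32:00 +0000] "GET / HTTP/1.1" 304 - "-" "Firefox/120.0"',
]

totals = bandwidth_by_agent(sample)
print(totals.most_common())
```

Sorting the totals with `most_common()` puts the heaviest consumers first, which makes a bandwidth-hungry crawler easy to spot.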

AWStats does not use cookies. It uses a 60-minute window to count visits. If a user browses for 30 minutes and comes back 35 minutes later, it is still counted as one visit, because the gap is under 60 minutes. However, if the user comes back only after more than 60 minutes of inactivity, it is counted as two visits.

AWStats is not a marketing statistics tool, but it lets you see which traffic comes from users and which from robots. You may discover that someone is hotlinking your images, eating up your bandwidth, and so on. AWStats is better suited as an admin tool than as a marketing tool.

Webalizer

Webalizer is similar to AWStats in that it analyzes the logs on the server, but it does not try to distinguish between human access and robot access.

Webalizer uses a 30-minute timeout to count visits instead of the 60 minutes used by AWStats, so it records more visits.
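The effect of the timeout can be sketched in Python. This is a simplified model, not code from either tool: it assumes a visit ends once the gap between two consecutive requests from the same visitor exceeds the timeout, and `count_visits` and the sample timestamps are hypothetical.

```python
from datetime import datetime, timedelta

def count_visits(timestamps, timeout_minutes):
    """Count visits for one visitor: a gap longer than the timeout starts a new visit."""
    timeout = timedelta(minutes=timeout_minutes)
    visits = 0
    previous = None
    for current in sorted(timestamps):
        if previous is None or current - previous > timeout:
            visits += 1
        previous = current
    return visits

# One visitor: two requests, a 45-minute break, then one more request.
requests = [
    datetime(2024, 5, 10, 12, 0),
    datetime(2024, 5, 10, 12, 10),
    datetime(2024, 5, 10, 12, 55),
]

visits_30 = count_visits(requests, 30)  # 30-minute timeout
visits_60 = count_visits(requests, 60)  # 60-minute timeout
print(visits_30, visits_60)
```

The same three requests count as two visits under the 30-minute timeout and as one visit under the 60-minute timeout, which is why the shorter timeout produces higher visit numbers from an identical log.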

You can configure Webalizer to ignore known spiders or robots.

Like AWStats, Webalizer is not a tool for marketers. As an admin tool, it is slightly weaker than AWStats. The software went quiet after 2002 and has only been updated again since 2008.
